Human-Centric Spatial Augmented Reality for Interactive (Dis)assembly Operator Assistance

Developing a computer-vision-enabled Spatial Augmented Reality framework for intelligent, privacy-preserving operator assistance in human-centric smart (dis)assembly.

Overview

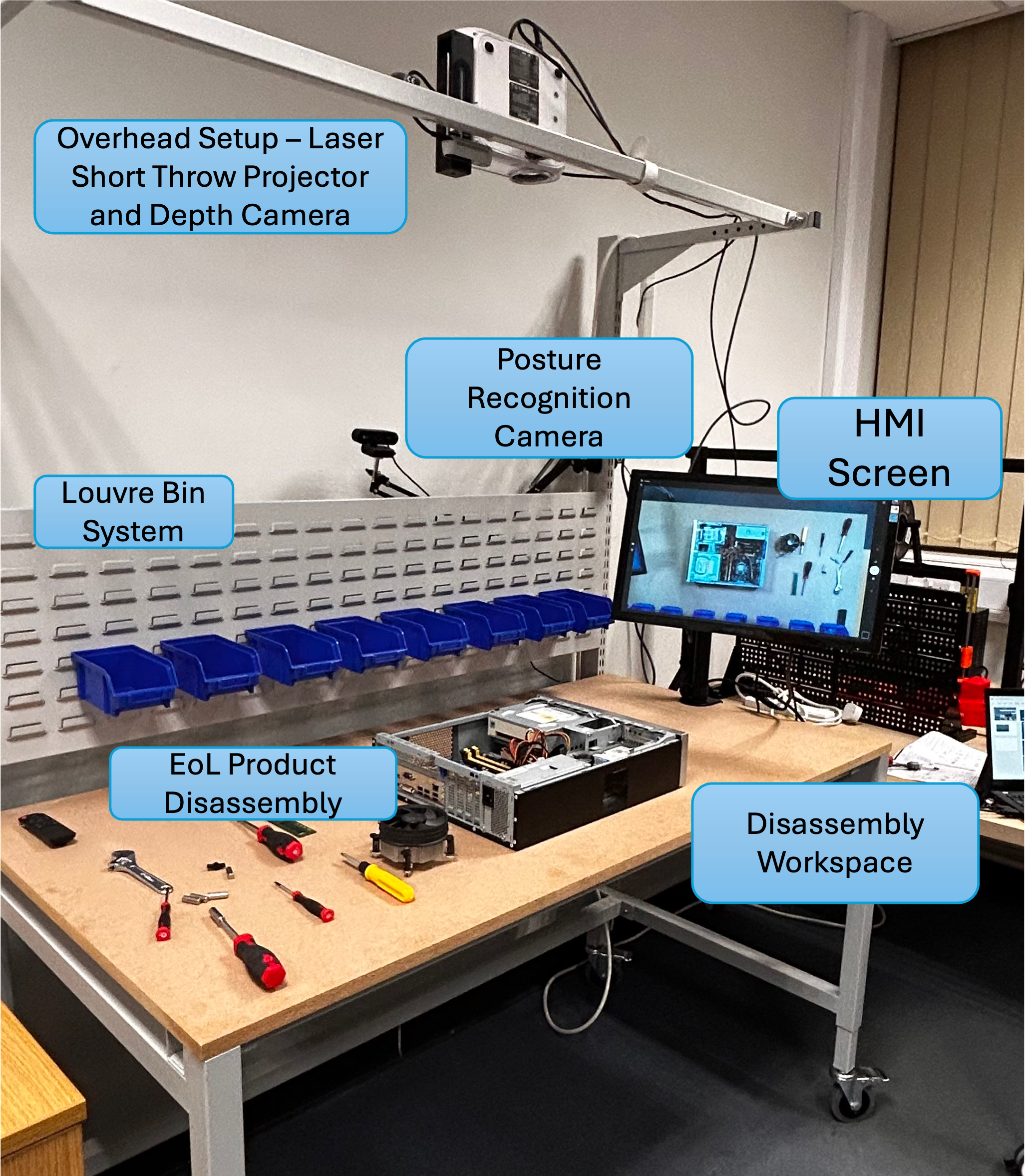

This project develops a human-centric Spatial Augmented Reality (SAR) system that projects adaptive, light-guided (dis)assembly instructions directly onto the workspace and is controlled through AI-based hand gesture recognition. The system delivers real-time guidance and error feedback without requiring handheld or wearable devices. Beyond guidance, the framework is designed as an intelligent operator assistance system that augments physical and cognitive capabilities: it informs users about posture-related physical risk and cognitive state (e.g., workload), and it protects operator identity through privacy-by-design sensing and data handling. User studies show significant reductions in task time, error rates, and perceived workload compared with conventional instruction methods.